Welcome to this edition of Ctrl+Alt+Deploy 🚀

I’m Lauro Müller and super happy to have you around 🙂 Let’s dive in right away!

In the last few days all I could see on LinkedIn was AWS, AWS, AWS… One region goes down, half the world goes down. Everybody talks about what caused it; very few talk about how not to be affected by it. “This incident only shows how reliant on AWS and the cloud companies are!” is what I mostly read. But in between the lines, I’d risk saying that what this incident surfaced was not overreliance on AWS, but rather how unprepared most companies are for this kind of situation. A cloud region is bound to eventually go down, so what matters is how well-prepared you and your systems are to deal with the disruption. Disaster recovery is not straightforward, yes, and the consequence is that it ends up being pushed back as new features (ooh, the new features) take priority. However, those who had an effective, clear, and tested disaster recovery plan were able to respond to the incident systematically and had their systems recover much faster than the AWS region itself.

So in this article, we’re gonna dive deeper into the four main disaster recovery strategies: how they differ from each other, the pros and cons of each, the trade-offs between them, and when to use each. It’s a lot of ground to cover, so let’s get started!

Learn Terraform and Bring Your AWS Infrastructure to the Next Level

If you’ve been around for a while, you know how much of a fan I am of declarative stuff. Infra as Code, GitOps, you name it. The AWS incident of the last few days only shows how important it is to have your infrastructure well defined and clearly structured, so that you can deliver resiliency and stability to your applications. So make sure to check it out, and here is a link with as much discount as I can give 😊

The Two Knobs to Turn: Understanding RTO & RPO

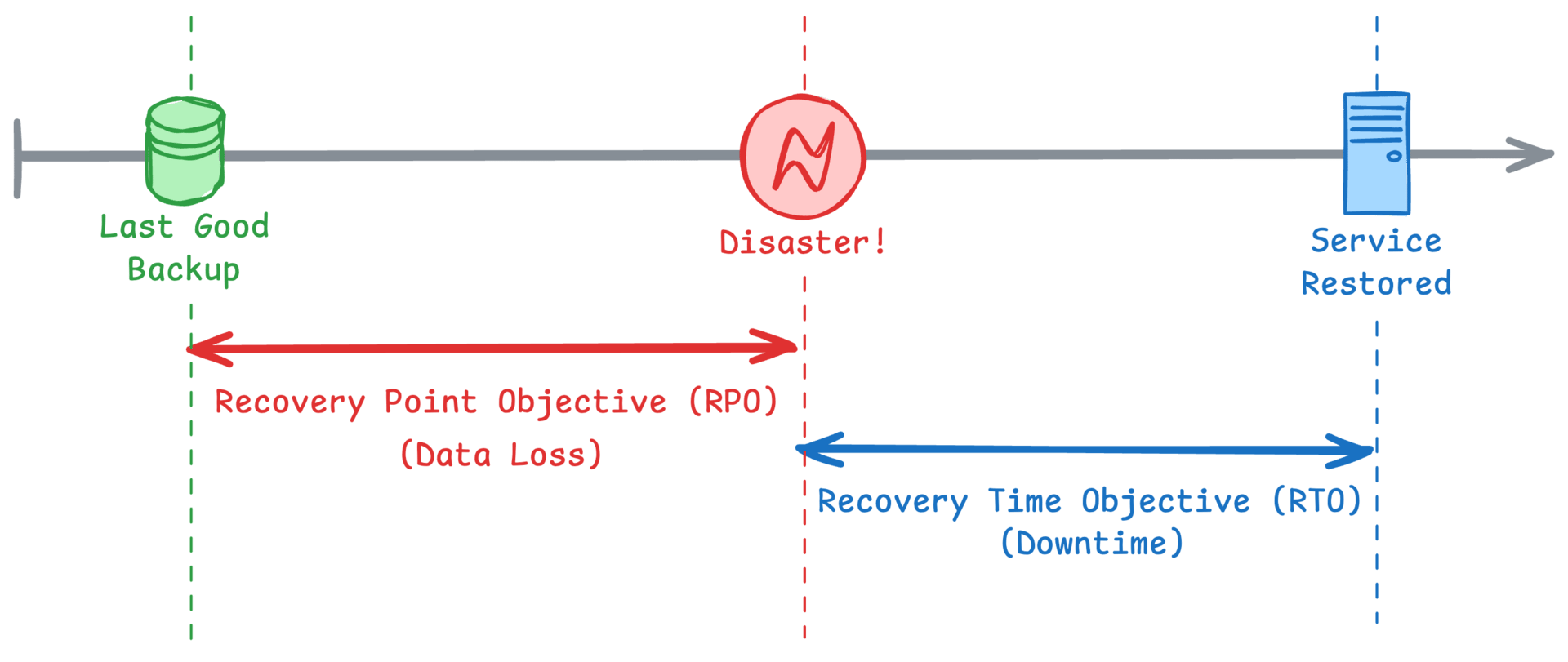

Before we dive into the strategies, we need to understand the two fundamental trade-offs in disaster recovery. Everything else is built on these two concepts:

Recovery Time Objective (RTO): How FAST do you need to be back?

This is about downtime. Is it okay for your service to be offline for a few hours, or does it need to be back in seconds? Think of it like a physical store: how long can you afford to keep the doors closed to customers?

Recovery Point Objective (RPO): How much DATA can you afford to lose?

This is about data loss. If your system goes down, are you okay with losing the last hour of data, or do you need to recover every single transaction up to the microsecond of the failure? Think of it as your store's sales records: can you re-enter a few lost tickets manually, or is losing even one a disaster?

The golden rule is this: the lower (and better) your RTO and RPO, the higher the cost and complexity. Your job is to find the right balance for each part of your system. Here's a simple way to visualize it on a timeline:

How much data loss and downtime you are willing to tolerate really depends on which system you are evaluating. Your internal analytics dashboard might have an RTO of 4 hours and an RPO of 1 hour, meaning it's okay if it's down for a bit and you lose a small amount of recent data. But your mission-critical payment processing system would probably need an RTO of less than 1 minute and an RPO of a few seconds, because every moment of downtime and every lost transaction costs real money! Customer-facing systems normally have lower RPO and RTO, since every second of downtime impacts your customers’ experience and can have a negative impact on your image and future revenue.
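To make the two knobs a bit more tangible, here is a minimal Python sketch that checks a past incident against a system's objectives. The `Objectives` and `Incident` classes, the `evaluate` function, and the timestamps are all made up for illustration; only the dashboard's 4 hour RTO and 1 hour RPO come from the example above.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Objectives:
    rto: timedelta  # maximum tolerated downtime
    rpo: timedelta  # maximum tolerated window of lost data


@dataclass
class Incident:
    last_recoverable_point: datetime  # newest data that survived (backup or replica)
    failure_time: datetime            # moment the system went down
    restored_time: datetime           # moment the system was serving again


def evaluate(incident: Incident, objectives: Objectives) -> dict:
    """Compare the actual downtime and data loss window with the objectives."""
    downtime = incident.restored_time - incident.failure_time
    data_loss_window = incident.failure_time - incident.last_recoverable_point
    return {
        "downtime": downtime,
        "data_loss_window": data_loss_window,
        "rto_met": downtime <= objectives.rto,
        "rpo_met": data_loss_window <= objectives.rpo,
    }


# The analytics dashboard from the text: RTO of 4 hours, RPO of 1 hour.
dashboard = Objectives(rto=timedelta(hours=4), rpo=timedelta(hours=1))

# Hypothetical outage: last good data at 09:30, failure at 10:00, back at 13:00.
outage = Incident(
    last_recoverable_point=datetime(2025, 1, 1, 9, 30),
    failure_time=datetime(2025, 1, 1, 10, 0),
    restored_time=datetime(2025, 1, 1, 13, 0),
)

result = evaluate(outage, dashboard)
print(result["rto_met"], result["rpo_met"])
```

The same outage checked against payment-style objectives (an RTO of 1 minute and an RPO of a few seconds) would miss both targets, which is exactly why different systems end up with different strategies.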

The Four Main DR Strategies

With RTO and RPO in mind, let's walk through the four common DR strategies. I love using analogies to make these stick, so bear with me and my little examples 🤓

1. Backup and Restore

This is the most common and straightforward approach. You regularly take snapshots of your data (like a database backup or a disk image) and store them somewhere safe and cheap, like Amazon S3 Glacier or Google Cloud Storage Coldline. If disaster strikes, you provision new infrastructure and "restore" from that backup.

It’s like keeping a copy of your important documents in a safe deposit box across town. It's very secure, but should you actually need them, it takes time to go to the bank, get the documents, and bring them back.

As already mentioned, each strategy has its pros and cons. For Backup and Restore, we can think of the following:

Pros: By far the cheapest option. It’s also simple to set up.

Cons: Highest RTO (it can take hours to provision and restore) and highest RPO (you might lose hours of data since your last backup). Also, restoring might not be as easy as you first expect, since a plain backup can miss minor infrastructure elements or configuration changes that were made to the live systems (IaC to the rescue!).

When to use: Because of its high RTO and RPO, this strategy is not recommended for production systems. It’s perfect for development environments, non-critical services, or systems where you can tolerate significant downtime and some data loss, but that’s as far as I’d go in terms of using it.
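If you want a rough feeling for the numbers behind Backup and Restore, a back-of-the-envelope estimate can look like the sketch below. The function names and all durations are hypothetical, and the model is deliberately simple: it assumes each backup captures the state at the moment it starts, and that a backup still in progress when the failure hits is unusable.

```python
from datetime import timedelta


def worst_case_rpo(backup_interval: timedelta, backup_duration: timedelta) -> timedelta:
    """Worst-case data loss window under the assumptions stated above.

    If the failure hits just before the running backup completes, the newest
    usable backup is the previous one, whose data is already one full interval
    plus one backup duration old.
    """
    return backup_interval + backup_duration


def estimated_rto(
    detection: timedelta,
    provisioning: timedelta,
    restore: timedelta,
    validation: timedelta,
) -> timedelta:
    """Downtime estimate: the recovery steps happen one after the other."""
    return detection + provisioning + restore + validation


# Hypothetical numbers for a nightly backup of a mid-sized database.
rpo = worst_case_rpo(
    backup_interval=timedelta(hours=24),
    backup_duration=timedelta(hours=1),
)
rto = estimated_rto(
    detection=timedelta(minutes=15),
    provisioning=timedelta(minutes=45),
    restore=timedelta(hours=2),
    validation=timedelta(minutes=30),
)
print(f"worst-case RPO: {rpo}, estimated RTO: {rto}")
```

Plugging in your own measured durations (ideally from an actual restore drill) is a quick way to see whether this strategy can meet a given system's objectives at all.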

2. Pilot Light

This is where things get more interesting. With the Pilot Light strategy, you replicate your data in real time to a DR region. The core infrastructure needed to run the service (like the database) is kept running, but the main application servers are turned off. In a disaster, you "turn on" the servers, scale them up, and point your traffic to the new region. Sounds a bit too abstract? For me too 💥 So let’s dive a bit more into how it would work by breaking it down by layer:

The Data Layer (The Pilot Light): Think of your database in the DR region as the constant, burning pilot light. It is always on and running. It acts as a live replica, constantly receiving a stream of updates from your main production database. It isn't serving any application traffic; its only job is to sit there and keep itself as closely in sync with production as possible, ready for the moment it's needed.

The Application Layer (The Rest): This is where the major cost savings happen. The infrastructure for your application servers is fully configured, but it’s scaled down to a bare minimum: often to zero running instances or replicas. For example, in AWS, your Auto Scaling Group would be defined but have its "desired capacity" set to 0. In Kubernetes, your Deployment manifest would be applied, but set to replicas: 0. All the configuration is there, but you aren't paying for constantly running application servers.
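To picture the "configured but switched off" idea, here is a small Python sketch that models a Kubernetes Deployment manifest as a plain dictionary with zero replicas. The application name `web-app`, the image, and the `with_replicas` helper are made up for illustration; in a real setup the manifest would live as YAML in your repository and be applied with your usual tooling.

```python
import copy

# Hypothetical Deployment for the DR region: fully defined, zero replicas.
dr_deployment = {
    "apiVersion": "apps/v1",
    "kind": "Deployment",
    "metadata": {"name": "web-app"},
    "spec": {
        "replicas": 0,  # nothing running, so nothing to pay for
        "selector": {"matchLabels": {"app": "web-app"}},
        "template": {
            "metadata": {"labels": {"app": "web-app"}},
            "spec": {
                "containers": [
                    {"name": "web-app", "image": "example.com/web-app:1.0.0"}
                ]
            },
        },
    },
}


def with_replicas(manifest: dict, replicas: int) -> dict:
    """Return a copy of the manifest scaled to the given replica count."""
    if replicas < 0:
        raise ValueError("replicas must not be negative")
    scaled = copy.deepcopy(manifest)
    scaled["spec"]["replicas"] = replicas
    return scaled


# During a disaster, the only field that has to change is the replica count.
ignited = with_replicas(dr_deployment, 10)
print(dr_deployment["spec"]["replicas"], "->", ignited["spec"]["replicas"])
```

The point of the sketch is that everything except the replica count is identical between the dormant and the ignited state, which is what keeps the scale-up step small and predictable.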

When a disaster strikes, the "ignition" process is triggered:

Scale-Up: You update the configuration to scale up your application servers from 0 to the required number (for example, set the desired capacity in the ASG to 10).

Warm-Up: The cloud platform automatically provisions and starts these servers.

Traffic Switch: Once the new servers are running and healthy, you make a DNS or load balancer change to route all user traffic to this now-active DR region.
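The three steps above can be sketched as a tiny simulation. Nothing here talks to a real cloud API: `DrRegion`, `Router`, and `ignite` are hypothetical stand-ins, and the "platform" simply starts one instance per polling round. What the sketch tries to show is the ordering, with the traffic switch happening only once the fleet is healthy.

```python
from dataclasses import dataclass


@dataclass
class DrRegion:
    """Simulated DR region with an application fleet scaled to zero."""
    desired_count: int = 0
    healthy_instances: int = 0

    def set_desired_count(self, count: int) -> None:
        self.desired_count = count

    def tick(self) -> None:
        """Simulate the platform bringing up one more instance per poll."""
        if self.healthy_instances < self.desired_count:
            self.healthy_instances += 1


@dataclass
class Router:
    """Simulated DNS or load balancer entry pointing at one region."""
    active_region: str = "primary"


def ignite(dr: DrRegion, router: Router, required: int, max_polls: int = 100) -> list:
    """Run scale-up, warm-up and traffic switch, in that order."""
    steps = []

    # 1. Scale-Up: ask for the required number of application servers.
    dr.set_desired_count(required)
    steps.append("scale-up")

    # 2. Warm-Up: poll until enough servers are healthy, or give up.
    polls = 0
    while dr.healthy_instances < required:
        if polls >= max_polls:
            raise TimeoutError("DR fleet did not become healthy in time")
        dr.tick()
        polls += 1
    steps.append("warm-up")

    # 3. Traffic Switch: only now route users to the DR region.
    router.active_region = "dr"
    steps.append("traffic-switch")
    return steps


dr, router = DrRegion(), Router()
print(ignite(dr, router, required=10), router.active_region)
```

Note that if the warm-up times out, the router is left untouched, so traffic is never sent to a half-started region.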

In terms of pros and cons for the Pilot Light, we can think of the following:

Pros: Much faster recovery time (lower RTO) than Backup and Restore. Relatively low cost since your application fleet is off.

Cons: More complex to set up and maintain. You're paying for the Pilot Light resources running in the DR region, and you do need to set up a reliable workflow to make sure that the data is correctly synchronized between the regions.

When to use it: This strategy provides a great middle ground for important but not mission-critical applications where a few minutes to an hour of downtime is acceptable. Since the restoration process still requires some manual work (scaling resources, redirecting traffic), there is still some noticeable downtime to end users, so this is not suited for highly critical production systems.

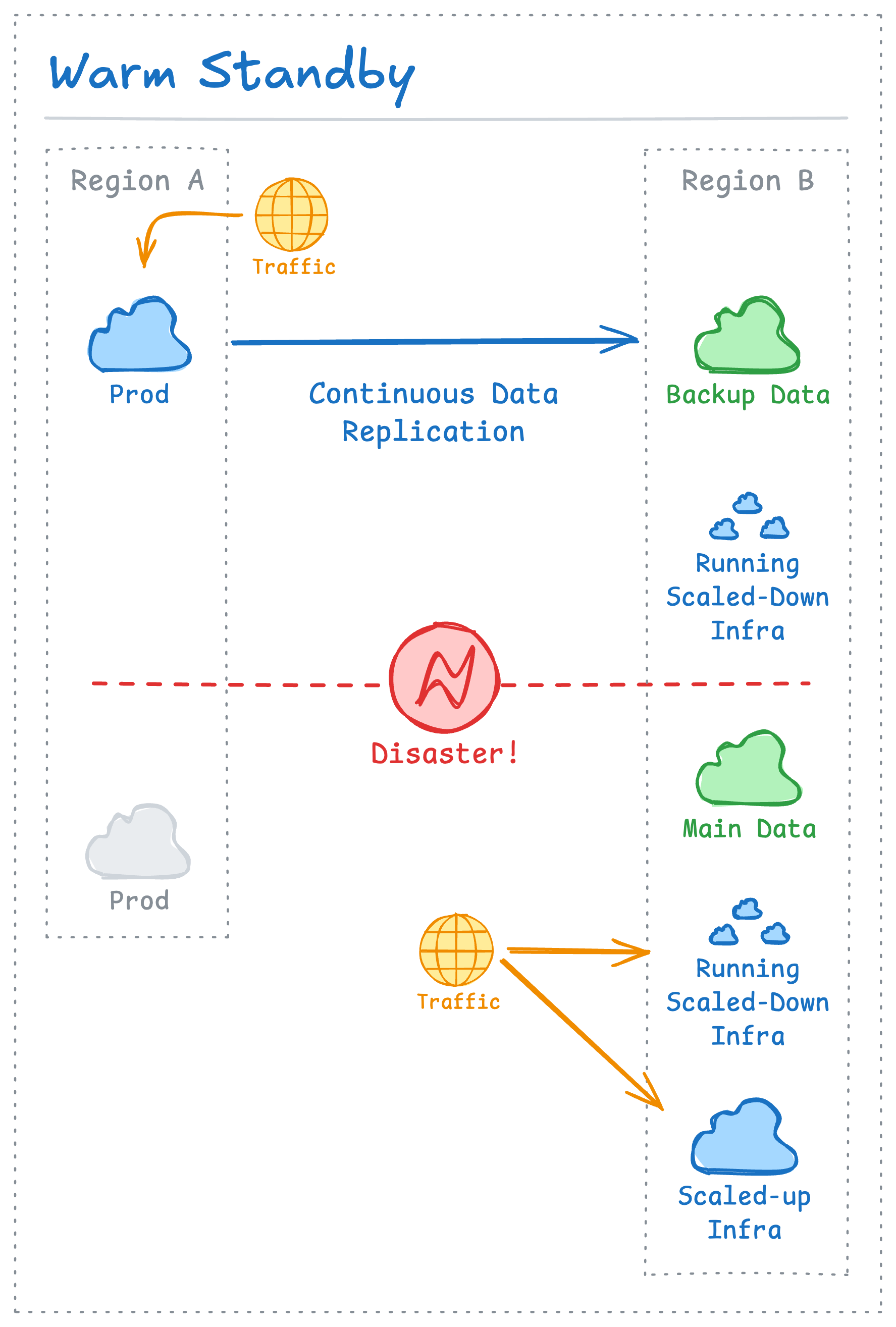

3. Warm Standby

A Warm Standby is a scaled-down but fully functional version of your production environment running in the DR region. Data is replicated in real-time, and the servers are on and running - they just aren't at full production scale. During a failover, all you have to do is switch traffic over and quickly scale up the resources to handle the full load.

Think of it as a backup generator for a shopping mall (I was about to write for your home, but really… who has a backup generator at home??). The generator is always running and ready, so when the power goes out, it kicks in automatically and can run the essentials (but not everything). The analogy fails a bit to capture the fact that, while you can’t scale a generator, you can scale your cloud resources to meet the production requirements.

In terms of pros and cons:

Pros: Very low RTO and RPO, on the scale of seconds to minutes. The failover process can be very fast. Actually, if you automate failover based on health checks (and you have effective health checks in place), it will happen as soon as production instances are deemed unhealthy.

Cons: More expensive than Pilot Light because more resources are actively running. It can also be quite complex to keep the DR environment in sync, especially when infrastructure changes beyond scaling operations happen.

When to use it: This is probably where your core business applications would fall. It’s ideal for systems where downtime is measured in thousands of dollars per minute, but some minimal outage is still acceptable.
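The health-check-based failover mentioned in the pros can be sketched in a few lines of Python. The `FailoverMonitor` class and its threshold of three consecutive failures are assumptions for illustration, not how any particular load balancer or DNS service implements it. Requiring several failures in a row is a common way to avoid failing over because of a single flaky check.

```python
class FailoverMonitor:
    """Switch to the standby after N consecutive failed health checks."""

    def __init__(self, failure_threshold: int = 3) -> None:
        self.failure_threshold = failure_threshold
        self.consecutive_failures = 0
        self.active = "primary"

    def record(self, primary_healthy: bool) -> str:
        """Feed in one health check result and return the active target."""
        if self.active == "standby":
            # This sketch never fails back automatically.
            return self.active
        if primary_healthy:
            self.consecutive_failures = 0
        else:
            self.consecutive_failures += 1
            if self.consecutive_failures >= self.failure_threshold:
                self.active = "standby"
        return self.active


monitor = FailoverMonitor(failure_threshold=3)
checks = [True, False, False, True, False, False, False, True]
history = [monitor.record(healthy) for healthy in checks]
print(history)
```

In this run, the two early failures are forgiven because a healthy check resets the counter, and the switch only happens on the third failure in a row. Failing back is left as a deliberate, manual decision.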

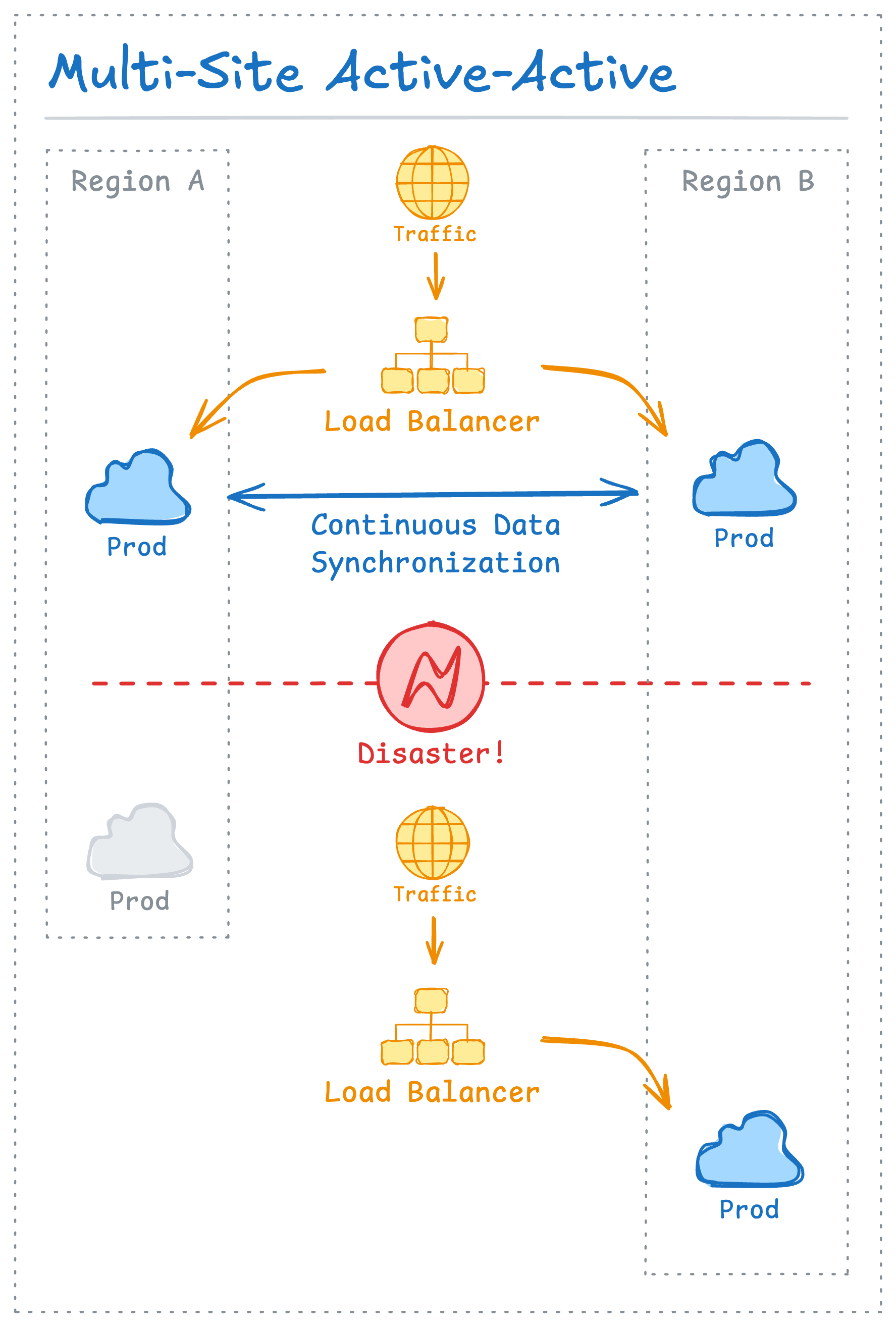

4. Multi-Site Active-Active

This is the gold standard of availability. In this setup, you have two or more fully independent, production-scale environments running in different regions. A global load balancer distributes traffic between them. If one region fails, the other is already handling a portion of the load and simply takes over the rest seamlessly.

Think of a retail chain with two fully staffed stores in the same city. If one has to close for an emergency, customers are already used to going to the other, and there’s no interruption in service.

Here are some pros and cons of the Multi-Site Active-Active strategy:

Pros: Near-zero RTO and RPO. Failover can be instant and often invisible to users.

Cons: This is definitely the most expensive and complex. You are effectively doubling your infrastructure cost. Since data writes happen in both regions, it does require sophisticated engineering to manage data consistency across sites.

When to use it: Because of its complexity, this strategy is probably overkill for most systems. That being said, it can be the right choice for absolutely mission-critical services that cannot go down, like payment processing systems or authentication services.
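To illustrate how the surviving region "simply takes over the rest", here is a minimal Python sketch of weighted traffic distribution across regions. The `route_shares` function, the region names, and the weights are hypothetical; real global load balancers have their own routing policies, and the sketch ignores the much harder data consistency side entirely.

```python
from typing import Dict, Set


def route_shares(weights: Dict[str, float], healthy: Set[str]) -> Dict[str, float]:
    """Split traffic across healthy regions in proportion to their weights."""
    live = {
        region: weight
        for region, weight in weights.items()
        if region in healthy and weight > 0
    }
    total = sum(live.values())
    if total == 0:
        raise RuntimeError("no healthy region available")
    return {region: weight / total for region, weight in live.items()}


weights = {"eu-west-1": 50, "us-east-1": 50}

# Normal operation: both regions share the load.
print(route_shares(weights, healthy={"eu-west-1", "us-east-1"}))

# One region fails: the other one absorbs all the traffic.
print(route_shares(weights, healthy={"eu-west-1"}))
```

This is also why each region has to be sized for the full production load: at any moment it might have to serve 100% of the traffic.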

Choose the Right Tool for the Job

As you can see, disaster recovery isn't a one-size-fits-all problem. It’s a spectrum, ranging from the cheap-and-slow "safe deposit box" to the expensive-and-instant "twin city."

The goal isn't to use Active-Active for everything. The goal is to look at each component of your system, understand its unique RTO and RPO requirements, and choose the most appropriate and cost-effective strategy for the job.
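As a rough illustration of that selection process, the Python sketch below picks the cheapest strategy whose typical RTO and RPO fit a component's requirements. The numbers in `STRATEGIES` are ballpark assumptions loosely based on the ranges mentioned in this article (for example, Backup and Restore assumes hourly backups), not guarantees, and `pick_strategy` is a hypothetical helper. Replace them with values measured on your own systems.

```python
from datetime import timedelta
from typing import Optional

# Ordered from cheapest to most expensive. The achievable RTO/RPO values
# are illustrative assumptions, not benchmarks.
STRATEGIES = [
    ("Backup and Restore", timedelta(hours=4), timedelta(hours=1)),
    ("Pilot Light", timedelta(hours=1), timedelta(minutes=1)),
    ("Warm Standby", timedelta(minutes=5), timedelta(seconds=10)),
    ("Multi-Site Active-Active", timedelta(seconds=5), timedelta(seconds=1)),
]


def pick_strategy(required_rto: timedelta, required_rpo: timedelta) -> Optional[str]:
    """Return the cheapest strategy that meets both objectives, if any."""
    for name, achievable_rto, achievable_rpo in STRATEGIES:
        if achievable_rto <= required_rto and achievable_rpo <= required_rpo:
            return name
    return None  # the requirements are tighter than any strategy listed here


# Internal analytics dashboard: RTO of 4 hours, RPO of 1 hour.
print(pick_strategy(timedelta(hours=4), timedelta(hours=1)))

# Payment processing: RTO of 1 minute, RPO of 5 seconds.
print(pick_strategy(timedelta(minutes=1), timedelta(seconds=5)))
```

Running this per component, rather than once for the whole platform, is what keeps you from paying Active-Active prices for a dashboard.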

I hope this helps demystify the topic a bit! It’s a fascinating area where smart engineering can provide huge business value.

What DR strategies are you using today? I'd love to hear about your experiences or any disaster recovery stories you have to share. Just hit reply and let me know!

🎉 That's a wrap!

Thanks for reading this edition of Ctrl+Alt+Deploy. Found these insights valuable? Share this newsletter with fellow developers and let me know which story resonated with you most!

Until next time, keep coding and stay curious! 💻✨

💡 Curated with ❤️ for the developer community